AI Factory or AI Ready Data Centre? The Name Doesn’t Matter – Execution Does

Matt Salter, Head of Data Centres at Onnec

Across the data centre industry, organisations are racing to build environments capable of supporting increasingly powerful GPUs – and the enormous compute demands that come with them.

New terminology has emerged alongside this shift. Vendors like Nvidia and hyperscalers such as AWS are increasingly using the term “AI factory” to describe a new generation of purpose-built facilities designed to orchestrate the entire AI lifecycle under one roof.

But the terminology itself is less important than the transformation happening beneath it.

Whether it’s an AI factory or an AI-ready data centre, these facilities represent a fundamental shift in how infrastructure is designed, deployed and operated. The demands of large-scale AI workloads are rewriting traditional assumptions about cooling, networking, deployment timelines and workforce capability.

For operators competing in this AI economy, success depends on how effectively they can respond to four structural factors now reshaping the sector:

1. Infrastructure requirements are fundamentally changing

AI workloads are placing unprecedented pressure on data centre environments.

GPU clusters produce extraordinary heat densities, pushing conventional cooling approaches to their limits. Air-based cooling systems that once comfortably supported enterprise compute environments are increasingly insufficient for high-performance AI deployments, meaning liquid cooling is rapidly moving from optional capability to baseline requirement.

A similar shift is taking place in networking. High-performance AI workloads rely on ultra-low-latency connectivity between GPUs, with technologies such as InfiniBand enabling the scale and speed required for modern training clusters. These architectures require different design principles, specialised cabling strategies and engineers with highly specific expertise.

At the same time, infrastructure components are becoming tightly interconnected. Cooling, cabling, rack configuration and airflow must now be designed as a single integrated system.

Achieving AI readiness today means far more than installing GPUs within an existing footprint. In many cases, it requires rethinking the environment in which those systems operate.

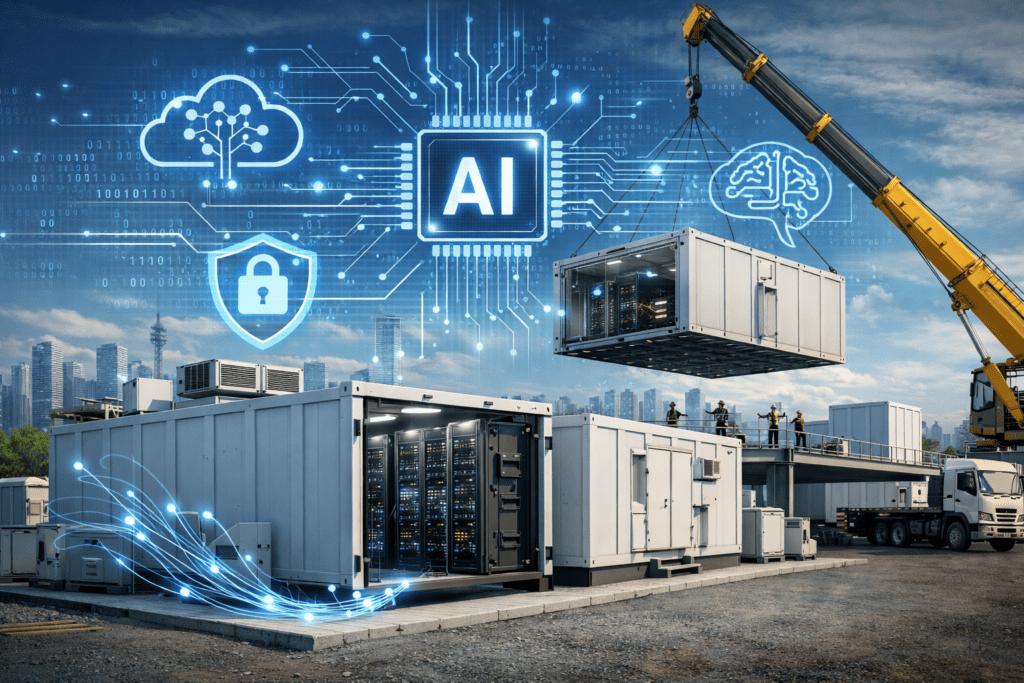

2. Speed has become the dominant commercial driver

Traditional data centre projects often unfolded over extended timelines, with long planning cycles, detailed procurement processes and carefully staged deployments.

AI infrastructure operates on a very different schedule.

Hyperscalers and AI-as-a-service providers are investing billions to expand compute capacity, and the pressure to operationalise that infrastructure quickly is intense. Once capacity is secured, deployment timelines can compress dramatically, with projects moving from planning to execution in weeks.

The key commercial question is no longer simply “Who is cheapest?” but “Who can get us live safely and fast?” The ability to mobilise quickly and deliver reliably under compressed timelines has become more valuable than marginal cost savings.

The stakes are amplified by the value of the hardware involved. GPU clusters represent immense capital investment, and supply constraints mean installation delays can carry significant commercial consequences.

3. Deployment has become a choreography exercise

Delivering high-density AI environments requires careful coordination between multiple interdependent elements.

Hardware delivery schedules must align with site readiness. Cooling systems must be operational before GPU installations begin. Specialist cabling and networking infrastructure must be installed with precision and at the right stage of the build.

At the same time, many organisations are rolling out AI capacity across multiple facilities and regions simultaneously.

This creates tightly choreographed deployment programmes where delays in one area can cascade across the entire project. Installation teams may receive confirmation only weeks before mobilisation, while infrastructure upgrades must be completed within narrow windows to align with hardware availability. Successful AI deployments depend on synchronising infrastructure, hardware, supply chains and specialist teams so they move together with precision.
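The sequencing constraints described in this section can be pictured as a dependency graph. The sketch below is illustrative only: the task names are hypothetical, and the assumption that cabling must finish before GPU installation is ours, not a stated rule. It uses Python's standard `graphlib` module to derive one valid build order and then lists the tasks that would be held up if a single step slipped.

```python
from graphlib import TopologicalSorter

# Hypothetical tasks; each maps to the tasks that must finish first.
# Only "cooling before GPU installation" and "hardware aligned with site
# readiness" come from the text above; the rest are assumptions.
dependencies = {
    "site_readiness": set(),
    "liquid_cooling_operational": {"site_readiness"},
    "cabling_installed": {"site_readiness"},
    "gpu_hardware_delivered": {"site_readiness"},
    "gpu_installation": {
        "liquid_cooling_operational",
        "cabling_installed",
        "gpu_hardware_delivered",
    },
    "cluster_live": {"gpu_installation"},
}


def deployment_order(deps):
    """Return one ordering in which every task follows its prerequisites."""
    return list(TopologicalSorter(deps).static_order())


def downstream_of(deps, delayed):
    """Return every task that directly or indirectly waits on `delayed`."""
    affected = set()
    frontier = [delayed]
    while frontier:
        current = frontier.pop()
        for task, prereqs in deps.items():
            if current in prereqs and task not in affected:
                affected.add(task)
                frontier.append(task)
    return affected


order = deployment_order(dependencies)
delayed_tasks = downstream_of(dependencies, "liquid_cooling_operational")

print("One valid build order:", order)
print("Held up if cooling slips:", sorted(delayed_tasks))
```

In this toy graph a slip in cooling readiness holds up both GPU installation and go-live, which is the cascade effect described above. A real programme would attach dates, durations and owners to each task rather than names alone.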

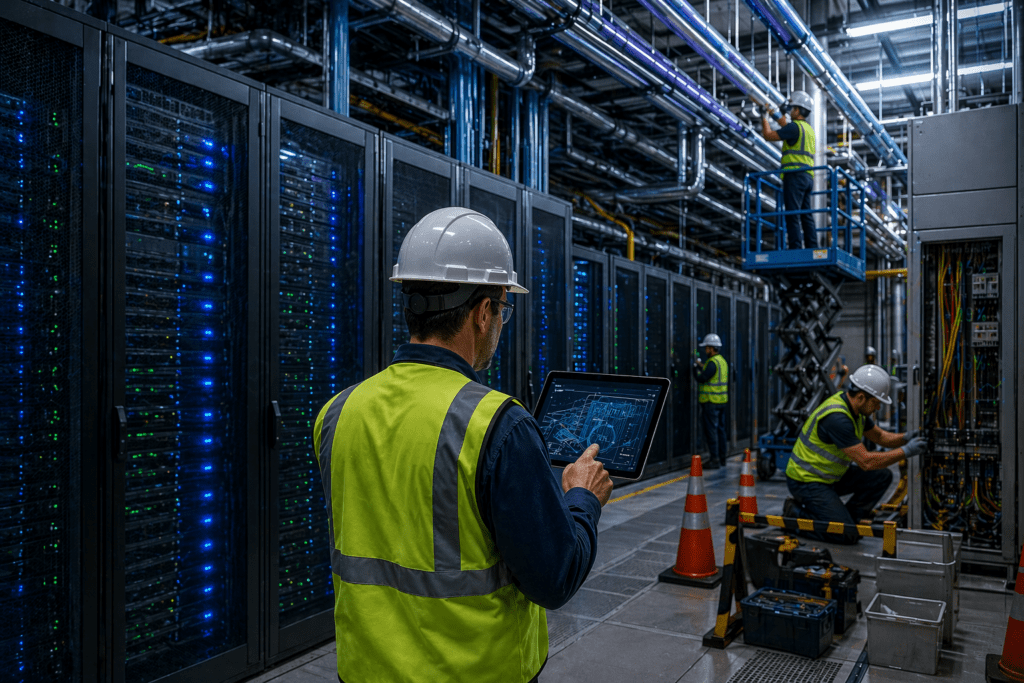

4. Specialist skills are becoming a critical constraint

Beyond infrastructure and logistics, another challenge is emerging: skills.

AI networking technologies such as InfiniBand require technical expertise that many infrastructure teams are still developing. Deploying high-density GPU environments also introduces new levels of complexity and risk compared with traditional CPU deployments.

At the same time, demand for these capabilities is expanding rapidly as countries compete to host new AI infrastructure.

Delivering high-density deployments at scale therefore requires not only experienced engineers but deep resource capacity – teams able to mobilise quickly, operate across markets and maintain consistent standards under pressure.

Organisations that invested early in developing these capabilities now hold a clear advantage. Others are racing to build the expertise required to support the next wave of AI infrastructure expansion.

Why operators can’t afford to go it alone

AI infrastructure demands new approaches to design, deployment and coordination. Projects are moving faster, technical requirements are becoming more complex, and specialist expertise remains relatively scarce.

In an environment where mistakes can be amplified by the scale and value of AI hardware, the role of experienced partners becomes increasingly important.

Organisations with deep expertise in high-density networking, liquid cooling integration and large-scale infrastructure mobilisation are no longer simply supporting deployments – they are helping enable them. Their ability to anticipate bottlenecks, coordinate supply chains and deliver reliably under compressed timelines can directly influence how quickly AI platforms reach operational scale.

Whether described as an AI factory or an AI-ready data centre, the terminology is secondary. What ultimately matters is execution – ensuring the infrastructure works technically, operationally and commercially.
